Andrew Bolt, Herald Sun, Dec 6 2009

I’ve wondered whether Climategate scientist Tom Wigley, an Australian, finally choked on all the fraud, fiddling and coverups he was witnessing from fellow members of his Climategate cabal. Steven Hayward points out that many other Climategate scientists privately had trouble swallowing the practices of their colleagues:

In 1998 three scientists from American universities–Michael Mann, Raymond Bradley, and Malcolm Hughes–unveiled in Nature magazine what was regarded as a signal breakthrough in paleoclimatology–the now notorious “hockey stick” temperature reconstruction (picture a flat “handle” extending from the year 1000 to roughly 1900, and a sharply upsloping “blade” from 1900 to 2000). Their paper purported to prove that current global temperatures are the highest in the last thousand years by a large margin–far outside the range of natural variability. The medieval warm period (MWP) and the little ice age (LIA) both disappeared.


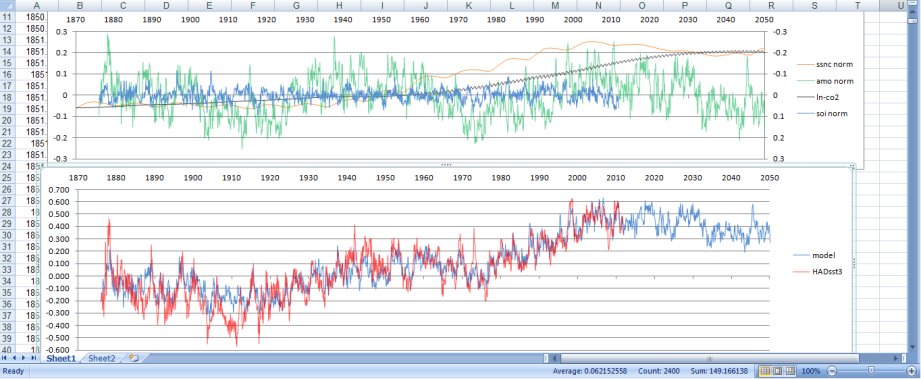

Jamal Munshi
